Last week, three of us sat on a video call and rearchitected the entire user interface of an internal Salesforce monitoring platform we've been building. New page hierarchy. Inline findings. Different rule binding logic. The works.

It took thirty minutes.

If I posted that on LinkedIn with screenshots of before and after, you'd recognize the genre immediately. "Built a platform UI in 30 minutes with AI." Three thousand likes. A few hundred comments arguing about it. At least one CTO posting a thread thirty seconds later about how their team did something similar in twenty.

I'm not going to write that post. The thirty minutes happened, but it isn't the interesting part.

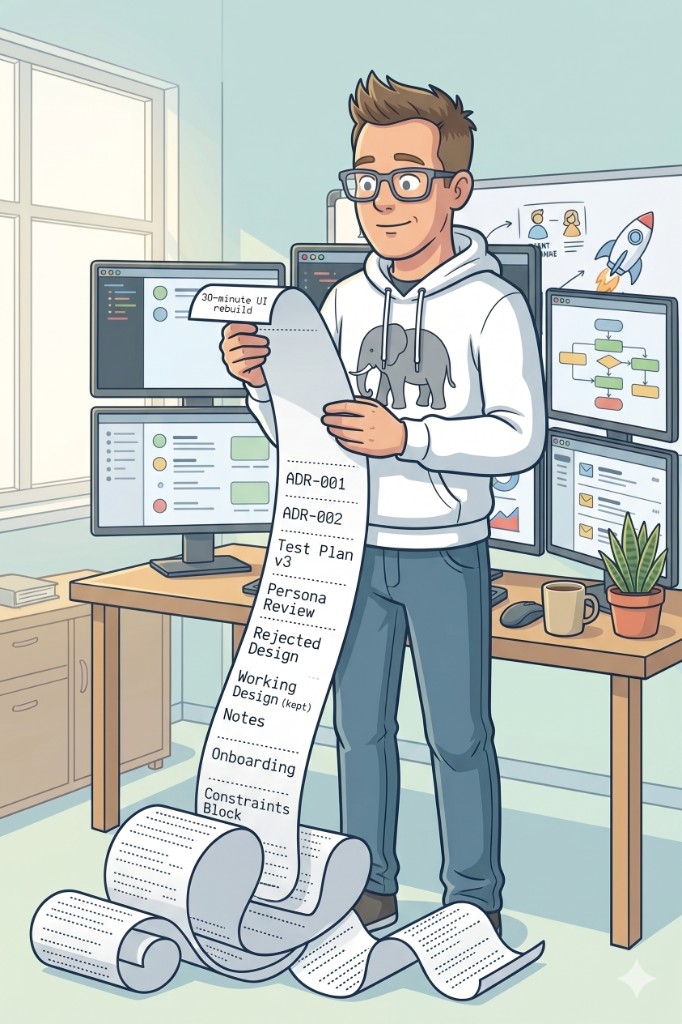

The interesting part is what was true at the end of those thirty minutes. The new design was documented. The test plan was updated. The rejected design was captured alongside the new one, with the reasoning we'd just talked through. The next person to open the project the following morning could read where we landed and where we'd considered going. Nobody had to redo any of it.

That list, not the thirty minutes, is what AI is actually changing about the way I work right now.

The headline that gets the causality wrong

The genre of "I built X in Y" posts measures the wrong thing. They tell you what the stopwatch said. They don't tell you what's in the artifact at the end.

What I'm finding, two-plus years into using AI tools across real projects, is that the artifact is the actual story. The work I'm shipping right now is more thoughtful than the work I was shipping pre-AI. Better-documented. Better-tested. With clearer trails of why we chose what we chose. The timeline is shorter too, but the timeline is downstream of the discipline. Not the other way around.

I want to be specific about this, because "AI makes you better" reads like the kind of vague affirmation I'd normally roll my eyes at. So let me show you what I mean across two real builds. One the LinkedIn version of AI would call fast. One it would call slow. Both came out better than the same effort would have produced two years ago.

The fast one

The platform from the workshop story is something three of us at Ateko have been building for about four weeks. It's a multi-environment monitoring tool that watches Salesforce orgs for risk: scans configuration and metadata, runs a few hundred rules, surfaces severity-rated findings with remediation guidance, and tracks health over time. Multi-environment SaaS. Real product. Real clients ahead of it.

Four weeks is fast. Conventionally, a platform of this scope and complexity is a twelve-to-eighteen month build with a multi-developer team and a six- or seven-figure budget. Anyone who's spent time around enterprise consulting builds will tell you that estimate is, if anything, conservative.

So yes, the speed-up is real. But I want to be careful here, because if I framed this post as "we built an enterprise platform in four weeks" that would be the same hype-cycle dishonesty as the thirty-minute version. It wouldn't be a lie exactly, but it would be missing the parts that matter.

What's actually in the four-week artifact:

- A sequenced series of architecture decision records, written in real time as the conversations happened. The next day, we'd feed the meeting transcript back into the AI and ask what we'd captured wrong, what we'd missed, and what got mentioned in passing but never made it into a file. Two layers: the live capture, and the next-morning sanity check against what the meeting actually said. When we changed our minds two weeks in (and we did), the original decision was struck through, not erased. The arc of how we got to the current design is in the file.

- A working notes file with numbered decisions, full revision history, and the moments where pushback got captured before it could drift into "what was that thing we said?" three sessions later.

- A test plan that was actually executed during the build, by a different AI persona than the one writing the code. Edge cases got caught while they were cheap to fix, not after a client found them.

- Onboarding written into the repo itself: five lines describing how the team operates (branches, what shared context lives in files versus chat, how decisions land). A new contributor reading it the morning after the workshop could pick up the thread without a meeting.

- A "Hard Behavioral Constraints" block that names the things the platform must never do, framed as architecture, not policy. Read-only access. No autonomous remediation. Compliance scope built in from week one, not bolted on during the audit.
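
To make "architecture, not policy" concrete, here is a minimal Python sketch of one way a read-only constraint can be structural rather than a rule someone has to remember. Everything in it is hypothetical: the `ReadOnlyOrg` interface, the `inactive_admins` rule, and the `FakeOrg` stand-in are illustrations, not the platform's actual code or any Salesforce API. Rules only ever receive a handle that has read methods, so no code path exists through which a rule could remediate anything.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


class ReadOnlyOrg(Protocol):
    """Hypothetical interface: the only handle rules ever receive.

    It has no update/delete/deploy methods, so a rule cannot remediate,
    whatever a prompt or a config flag says.
    """

    def list_users(self) -> list[dict]: ...


@dataclass(frozen=True)
class Finding:
    rule_id: str
    severity: str
    detail: str
    remediation: str  # guidance for a human to act on; never executed here


# A rule is a plain function from read-only state to findings.
Rule = Callable[[ReadOnlyOrg], list[Finding]]


def inactive_admins(org: ReadOnlyOrg) -> list[Finding]:
    """Hypothetical rule: flag admin users who are no longer active."""
    return [
        Finding(
            rule_id="USR-001",
            severity="high",
            detail=f"{u['username']} is inactive but still has admin rights",
            remediation="Review and remove the admin permission manually.",
        )
        for u in org.list_users()
        if u["is_admin"] and not u["is_active"]
    ]


def run_rules(org: ReadOnlyOrg, rules: list[Rule]) -> list[Finding]:
    # The runner only collects findings; nothing here writes back to the org.
    findings: list[Finding] = []
    for rule in rules:
        findings.extend(rule(org))
    return findings


class FakeOrg:
    """In-memory stand-in for an org connection (hypothetical)."""

    def __init__(self, users: list[dict]) -> None:
        self._users = users

    def list_users(self) -> list[dict]:
        # Return copies so callers cannot mutate the stored state.
        return [dict(u) for u in self._users]


if __name__ == "__main__":
    org = FakeOrg([
        {"username": "ana@example.com", "is_admin": True, "is_active": False},
        {"username": "ben@example.com", "is_admin": True, "is_active": True},
    ])
    for f in run_rules(org, [inactive_admins]):
        print(f.severity, f.detail)
```

The design choice is remediation by omission. Guidance travels as text in `Finding.remediation`, and a human decides whether to act on it.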

The thirty-minute UI rearchitecture from the workshop fits inside that four-week artifact. We didn't move fast because we cut corners. We moved fast because the cost of changing our minds was already low. The decisions were written down. The test plan would catch what the rearchitecture broke. The next contributor would understand both the old design and the new one. Reversibility was the precondition for the speed, and the documentation discipline is what made it reversible.
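
The "struck through, not erased" habit from the decision records is easy to model. Below is a hypothetical, minimal append-only decision log in Python. Superseding a decision adds a new numbered entry and marks the old one instead of deleting it, so the rendered file shows both where you landed and where you'd considered going. The class names, the example decisions, and the `~~strikethrough~~` rendering (a GitHub-flavored Markdown extension) are assumptions for illustration, not the team's actual tooling.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Decision:
    number: int
    title: str
    rationale: str
    superseded_by: Optional[int] = None  # set on reversal; the entry is never deleted


@dataclass
class DecisionLog:
    entries: list[Decision] = field(default_factory=list)

    def record(self, title: str, rationale: str) -> Decision:
        """Append a new decision with the next sequential number."""
        decision = Decision(len(self.entries) + 1, title, rationale)
        self.entries.append(decision)
        return decision

    def supersede(self, old_number: int, title: str, rationale: str) -> Decision:
        """Record a replacement decision and mark the old one, keeping both."""
        if not 1 <= old_number <= len(self.entries):
            raise ValueError(f"no decision numbered {old_number}")
        new = self.record(title, rationale)
        self.entries[old_number - 1].superseded_by = new.number
        return new

    def current(self) -> list[Decision]:
        """The decisions still in force."""
        return [d for d in self.entries if d.superseded_by is None]

    def render(self) -> str:
        """Render the full history, striking through reversed decisions."""
        lines = []
        for d in self.entries:
            heading = f"{d.number:03d}. {d.title}"
            if d.superseded_by is not None:
                heading = f"~~{heading}~~ (superseded by {d.superseded_by:03d})"
            lines.append(heading)
            lines.append(f"    Why: {d.rationale}")
        return "\n".join(lines)


if __name__ == "__main__":
    log = DecisionLog()
    log.record("Findings on a separate page", "Keeps the dashboard uncluttered.")
    log.supersede(1, "Findings inline on each page", "Reviewers lost context switching pages.")
    print(log.render())
```

`current()` filters out reversed decisions and `render()` doesn't. That lets the same log answer both "what's true now" and "how did we get here."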

A teammate said something during that workshop that's stuck with me, and I'll paraphrase to keep it in the right vague register: pre-AI, a rearchitecture like that would have been a capital-D Decision, with a meeting, a sprint commitment, and team-morale insurance. Today it's something you do in a workshop while three people watch. That fluidity changes what's worth attempting in the first place. You stop optimizing for "don't change your mind" and start optimizing for "be honest about whether the design still fits." Which is, it turns out, the harder discipline.

For more on this point, the habit of pulling decisions out of conversation and into files before they evaporate, see "The Conversation Is Disposable. The Context Is Not." It's the same pattern. The platform build is what it looks like at scale.

The slow one

Going the other way: I've been building an internal project management and time-tracking platform for Ateko for two-plus months. It replaces two established platforms we'd been running in parallel, plus a stack of manual processes nobody enjoyed. One was a project management tool whose latest major release broke our team's core workflow. The other was a time-tracking system that lived in a different universe from resource planning, so practitioners spent half their time copying data between the two.

It's a real platform. Resource planning, project management, time tracking, exec reports, an in-app AI assistant, ninety-one seeded users, six practice teams, a couple hundred thousand simulated time entries, status reports, time off, the whole thing. It's running in Docker right now. Not yet in production, but close.

I'm the only person building it. Which doesn't mean what it sounds like.

What it actually means: I'm running a series of stakeholder interviews with the people whose work this platform is going to change. Practitioners. Resource managers. Execs who consume the reports. The interviews get recorded and AI-transcribed, and the transcripts go into Cursor as research and planning material alongside screenshots from real-time use of the in-progress app. The build is solo. The design has half the company's fingerprints on it.

That distinction matters because the thing AI is doing here isn't replacing teammates. It's holding context across a body of input that I, working alone, could not otherwise hold in my head: hours of stakeholder conversation, weeks of design decisions, a queue of edge cases captured one screenshot at a time. The AI is the substrate that lets a one-person build absorb a multi-stakeholder design process without losing the thread.

Two months in, going on three. By the LinkedIn-version standard, that's slow. The "I built our entire PM stack in a weekend" post would write itself if I were inclined. I'm not, because that post would also be a lie, and not just because I haven't built it in a weekend.

The honest version of this build:

- It's expensive in credits. The API spend on this project alone is more than I'd budgeted for a full year of AI tooling, and the trend is up, not down. AI is not free, and companies treating their AI budget as an afterthought are about to learn that the hard way on a Q3 P&L.

- It's comparatively cheap, though, when you do the headcount math. A pre-AI build at this scope, in a consulting model, would conventionally take a three-to-five-person team about a year. My version is a few months of my time plus a credit line for AI tooling. Expensive in absolute terms, dramatically cheap relative to the alternative.

- It's intensive. The reason it's months and not years is that I've been treating it the way I'd treat a client engagement: concentrated time and the same scaffolding discipline as the team build above. ADRs. Working notes. Test plan written before the feature. QA mode after.

- The artifact, again, is what's notable. Not the timeline. This thing is more thoughtfully designed than anything I would have shipped at this scope two years ago. Test coverage that I'd have skipped under deadline. Decisions documented as I made them, including the ones I later reversed. Edge cases identified by an AI persona running an adversarial review at 11 PM, when a tired solo builder would have shipped the bug.

So this one is "slow" by hype-cycle standards. Slow, expensive, intensive. It's also better than the version I would have shipped pre-AI in twice the time, with half the documentation, and a backlog of compromises I'd have rationalized as "we'll fix it later."

(For the record: I'm running this build and the team build in parallel, plus a couple of others moving at lower throttle in the background. None of them suffers from the others' presence, because the AI holds the context for each one while I'm not in it. If you've read my piece on AI and ADHD, you'll recognize this. Running parallel projects without the usual switching cost is, for my brain, the entire point.)

That's the thing the genre keeps getting wrong. The right metric isn't time-to-build. It's quality-per-unit-of-effort, and the gap between AI-augmented work and pre-AI work shows up in the second number a lot more visibly than the first.

The data, since you asked

I'm not the first person to notice this, and the receipts run in the same direction.

The METR randomized controlled trial gave experienced open-source developers real tasks in repos they'd contributed to for years, randomly allowing or disallowing AI tools. The result: developers were 19% slower with AI, but believed they'd been 20% faster. That's a gap of nearly forty points between actual speed and felt speed, and it runs in the flattering direction.

Apollo.io's engineering team measured AI productivity across 250-plus engineers over a year and landed on 1.15x. Not 10x. Not even 2x. The strategic takeaway from that study is the line that's been pinging around my head for months: "Invest in context infrastructure: deeply engineer your codebases to be LLM-friendly." The tool isn't the differentiator. The context layer around it is.

Matt Giaro, who's written 500,000 words with AI from outside developer-land (he's a creator, not a coder), arrived at the same conclusion in his own words: "AI saves bandwidth, not time." Different domain, same finding.

What the three sources have in common: the headline productivity gain is small, and the people who report bigger gains are usually wrong about it. What also unites them, less explicitly: everyone who did better than the average had invested in something upstream of the tool. Context. Documentation. Discipline. The thing the AI is sitting on top of.

This is consistent with what I'm seeing across both my builds and pretty much every project I've watched succeed. The teams that get durable value from AI aren't the ones with the cleverest prompt. They're the ones who've built scaffolding the AI can lean on, and who treat the AI as a force multiplier on whatever discipline already exists. If the discipline isn't there, you'll get faster slop. The METR result is what "faster slop" looks like when you measure it.

What to actually do

Three things, written for anyone evaluating AI tools right now, whether you're an engineer, a leader, or a non-developer who's been told AI is going to change your job:

Slow down the parts that pay back. Planning before execution. Decision capture as you make them, not as a clean-up pass after. Test design before the feature. These are the parts AI makes cheap enough to actually do consistently. Skip them and you're back to METR.

Treat AI as a multiplier on discipline that already exists. It does not create discipline. It amplifies what's there. A team that already documents and tests will produce a noticeably better artifact with AI. A team that doesn't will ship faster and at the same quality, which often means worse, because the team now has more output to be wrong about.

Front-load the cost. Investment in scaffolding and process feels slower in week one. By week three, it compounds. The teams that get the most out of AI are the ones that invested before the payoff was visible.

The frame I keep coming back to, ripped from a conversation we had on the team a couple weeks back: AI isn't velocity. It's quality at a sustainable pace. The faster-pace version exists. It's just not what's making the durable difference.

Close

The thirty-minute post isn't a lie. It's a half-truth that drops the part that matters. I have actually rebuilt UIs in thirty minutes, and the rebuild was good, and the speed wasn't the reason it was good.

Stop measuring AI by the stopwatch. Measure it by what's true at the end. If the artifact is better (better tested, better documented, better understood by the next person who reads it) the speed will sort itself out. If you're measuring time and the artifact is sloppy, you've optimized for the wrong number.

I'm building two things at once right now. One moving fast. One moving slow. They're both better than they would have been two years ago. That's the actual news. The timelines are a distraction.