A few days after I published The AI Director Protocol, an old Dalhousie classmate commented on the LinkedIn post. We took Computer Science together about twenty years ago. He runs a similar multi-agent setup on his own work: nine specialists, including a PM. But he does something I didn't. He treats the PM as disposable.

New feature? New PM conversation. Every time. His reasoning was simple: "Otherwise, I risk Claude compacting the conversation and losing crucial information."

I read that and felt the scar tissue twitch.

The day Gemini 3 ate my context

I'd been running long-lived PM conversations in Gemini for months. They held entire projects in their heads: master plans, summaries from a dozen specialist conversations, architecture decisions, deployment notes. Weeks of accumulated context per project. It all just worked.

Then Google released Gemini v3, and I kicked off the next big initiative the same way I always did. New project, fresh PM conversation, build up context with the playbook that had served me well for months. Specialist summaries in. Master plan in. Decisions captured as we went. Days of productive back-and-forth.

And then one morning I'd ask about a deployment decision from Tuesday and the AI would invent a new plan from scratch. I'd reference Alex's database schema and the PM would act like Alex was never there. The conversation that had felt rock-solid the night before now had holes in it where load-bearing context used to be.

I figured out what happened a while after the fact. The new release shipped a feature meant to reduce hallucinations: when the context window filled up, Gemini would just lop off the oldest exchanges and keep going. The intent was good. The result was the opposite. Whatever context was tied to those deleted exchanges (decisions, named personas, the reasoning behind the architecture) was now floating without a reference point. The model would reach for it, find nothing, and confidently make something up to fill the gap.
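To make the failure mode concrete, here is a minimal sketch of oldest-first truncation. It is not Gemini's actual implementation, which I never saw. The `truncate_oldest` function, the whitespace "token" counter, and the sample messages are all assumptions for illustration.

```python
from collections import deque


def truncate_oldest(messages, budget, count_tokens=lambda m: len(m.split())):
    """Drop the oldest messages until the remainder fits in `budget` tokens.

    `count_tokens` is a crude stand-in (whitespace split), not a real tokenizer.
    """
    kept = deque(messages)
    total = sum(count_tokens(m) for m in kept)
    while kept and total > budget:
        # The oldest exchange goes first, regardless of how important it was.
        total -= count_tokens(kept.popleft())
    return list(kept)


# Hypothetical conversation: the early messages carry the decisions.
history = [
    "Decision: Alex owns the database schema.",
    "Decision: deploy on Tuesday behind a feature flag.",
    "Specialist summary: API endpoints drafted.",
    "Question: what did we decide about deployment?",
]

visible = truncate_oldest(history, budget=12)
lost_decisions = [m for m in history if m.startswith("Decision") and m not in visible]
```

In this toy run the question about deployment survives, but both decisions it depends on land in `lost_decisions`. The model still gets asked; it just has nothing left to answer from.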

Efforts to avoid hallucinations led to even more hallucinations, coupled with a little bit of gaslighting.

The kicker is that my old projects, the ones I'd started before v3, kept working fine. They were grandfathered onto v2.5. That's why I had no early warning. The wall only ever showed up in new conversations, built the way I'd always built them.

In the old days, when a conversation got too crowded, I could ask the PM to write a "parachute prompt": a dense summary that would bootstrap a new chat. Clumsy, but it worked. With v3 you couldn't even do that. The context you'd need to write the parachute was already gone.

What I actually lost

The code was the easy part. I could look at what existed and work backwards from it.

What was harder, and in some cases impossible, was reconstructing the reasoning. Over weeks of work, the PM had quietly built up a thicket of small decisions: why we'd chosen one schema over another, why a particular endpoint was structured the way it was, why we'd ruled out an approach that on paper looked simpler. Those decisions weren't written down anywhere else. They lived in the conversation, which now meant they didn't live anywhere.

When I bootstrapped a new PM and brought it up to speed on the project, it was eager to help. It would suggest changes that contradicted things I'd settled weeks earlier, for reasons I couldn't always remember well enough to defend. I caught some. I missed others. The project started drifting away from its own history.

That's when I clued in. The single point of failure was never the PM conversation. It was the assumption that the conversation was the source of truth.

Files, not chats

The fix that worked was moving the project's brain out of the conversation and into files on disk.

I'd already started using Cursor for coding by then, and it solved this problem almost by accident. Cursor doesn't carry your project context inside a conversation thread. It carries it in the codebase: rules files, working notes, project-level configuration. When a chat ends, the context doesn't end with it. The next session opens up and the context is sitting right there in the files, ready for whatever you do next.

Cursor isn't the only tool that works this way. Claude Code, Codex, and Google's Antigravity all sit in the same family, and there are more arriving every month. The pattern matters more than the brand. Anything that treats your repo as the durable home for AI context, instead of treating the chat as the durable home, gives you what I'm describing.

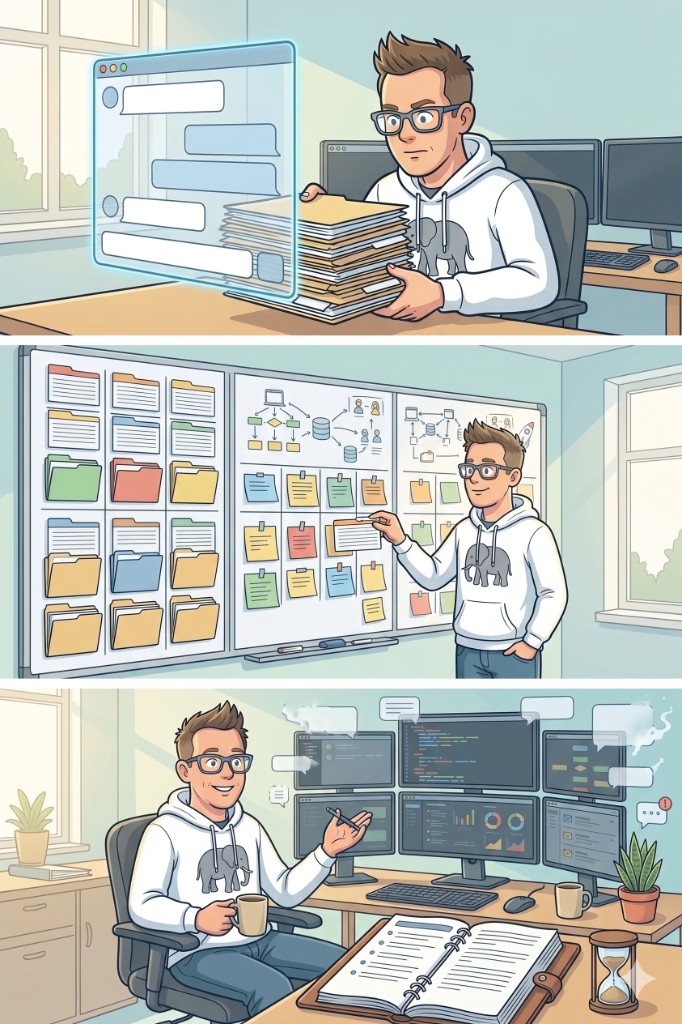

I built up a starter kit I now drop into every new project. Roughly fifteen personas (the Director Protocol cast, made reusable), a set of standing rules for how the AI should behave, and a working notes file that captures decisions and current state. Some rules are always on. Others wake up only when relevant. I can ask a security expert to look something over without re-explaining who they are or what they care about, because the file already knows.
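The "always on" versus "wakes up when relevant" split can be sketched in a few lines. This is an illustration of the idea, not how Cursor or any other tool implements it. The `Rule` class, the `active_rules` helper, the rule names, and the glob patterns are all hypothetical.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass
class Rule:
    name: str
    text: str
    always_on: bool = False
    globs: tuple = ()  # file patterns that wake this rule up


def active_rules(rules, touched_files):
    """Return rules that are always on, plus rules whose globs match a touched file."""
    selected = []
    for rule in rules:
        matches = any(
            fnmatchcase(path, pattern)
            for path in touched_files
            for pattern in rule.globs
        )
        if rule.always_on or matches:
            selected.append(rule)
    return selected


# Hypothetical kit: one standing rule and two personas scoped by file pattern.
rules = [
    Rule("house-style", "Explain trade-offs before writing code.", always_on=True),
    Rule("security-expert", "Review auth and input handling.", globs=("*/auth/*", "*.sql")),
    Rule("db-architect", "Check schema changes against the working notes.", globs=("*.sql",)),
]

api_session = [r.name for r in active_rules(rules, ["src/api/routes.py"])]
auth_session = [r.name for r in active_rules(rules, ["src/auth/login.py"])]
```

Here `api_session` picks up only the standing rule, while `auth_session` also wakes the security persona. The point of the split is that scoped rules cost nothing in context until the work actually touches their territory.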

None of this lives inside a conversation. It all lives in files. Conversations come and go. The files don't.

But the files don't take care of themselves either.

The habit that keeps it from rotting

Periodically, mid-session, I ask the AI to review the full chat and document anything new that isn't already in the project's notes or rules. Not at the end. Not when I happen to remember. Roughly every time the conversation has surfaced something I know I'm going to want next week.

The actual prompt is something like: "Review everything we've discussed in this chat. Is there a decision, a constraint, a clarification, or a trade-off that isn't in our working notes or project rules? If so, write it in."

The AI is good at this. It can scan a long thread and pick out the moments where something was decided or learned. It catches things I forgot to write down. "We chose batch processing over triggers in message 47 because of the governor limit, but that's not in the working notes." "The client confirmed the timezone requirement in message 23, and it's not documented." Then it writes them in.
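The "write them in" step is where duplicates creep in, so it helps to picture it as an append that skips anything already recorded. The sketch below assumes the working notes are a plain markdown bullet list. The `append_new_notes` helper and the `working-notes.md` filename are hypothetical, and the matching is deliberately naive (exact text after normalizing case and whitespace).

```python
import tempfile
from pathlib import Path


def normalize(line):
    """Lowercase, drop a leading bullet, and collapse whitespace for comparison."""
    return " ".join(line.strip().removeprefix("- ").lower().split())


def append_new_notes(notes_path, candidates):
    """Append candidate notes that are not already in the file; return what was added."""
    path = Path(notes_path)
    existing = path.read_text(encoding="utf-8") if path.exists() else ""
    seen = {normalize(line) for line in existing.splitlines() if line.strip()}

    added = []
    for item in candidates:
        key = normalize(item)
        if key and key not in seen:
            seen.add(key)
            added.append(item.strip())

    if added:
        with path.open("a", encoding="utf-8") as fh:
            if existing and not existing.endswith("\n"):
                fh.write("\n")
            for item in added:
                fh.write(f"- {item}\n")
    return added


# Demo in a temporary directory so nothing real is touched.
with tempfile.TemporaryDirectory() as tmp:
    notes = Path(tmp) / "working-notes.md"
    notes.write_text("- Client confirmed the timezone requirement.\n", encoding="utf-8")
    added = append_new_notes(notes, [
        "Client confirmed the timezone requirement.",
        "Chose batch processing over triggers because of the governor limit.",
    ])
    final_text = notes.read_text(encoding="utf-8")
```

In practice the AI does the judgment call about what counts as "already documented", and it is better at paraphrase than an exact-match check. The sketch only shows the shape of the operation: read what's there, add what's missing, leave the rest alone.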

This is the part nobody talks about, and it's the part that makes the rest of it work. The personas keep individual conversations focused. The rules give the AI consistent behaviour. But the periodic extract-and-document pass is what keeps the whole setup honest over time.

Why this matters more for teams

For one person working alone, the disposable-PM approach holds up fine. The context lives in your head and in your current chat. You can rebuild it when you have to.

For a team, that doesn't hold up. The context lives in one person's head and one person's chat. Nobody else can see it.

When the context lives in files instead, something shifts. A teammate pulls the project down on their machine, opens it up, and gets the same personas, the same rules, the same working notes I've got. They don't need my chat history. They don't need to ask me what I decided about the API. The answer is in the files because someone took the minute to put it there.

That changes the unit of collaboration. Instead of "let me walk you through what I discussed with the AI," it becomes "open the project, you're caught up." The AI stops being one person's tool. It becomes a shared resource that retains what the team has figured out.

I'm building a version of this for my team at work right now. The goal is that anyone on the team can open a project and get the same starting point I get, with whatever's been figured out already written down. Onboarding cost drops to near zero, because the context is in the project, not in someone's head.

The honest limits

You have to actually keep up the habit. The review-and-document loop takes discipline. It's easy to get rolling on a productive conversation and forget to extract the decisions. When I let it slide, the documentation drifts from reality. Stale documentation can be worse than no documentation, because people trust it.

Not everything is worth capturing. Conversations generate a lot of noise around the signal: dead-end exploration, debugging detours, paths considered and rejected for obvious reasons. Over-document and the working notes balloon to the point where nobody reads them and the AI burns context window on stuff that isn't useful.

There's a real startup cost. Setting up the kit, writing the personas, defining the rules: ten or fifteen hours of investment before any of it pays off. For a weekend project, that's ridiculous overkill. For a team that's going to reuse the same kit across dozens of projects over years, it pays for itself many times.

Some things don't externalize cleanly. Intuitions. Hunches. The "something is off about this approach" feeling you can't quite explain. Those are real and worth listening to, but they don't always reduce to a sentence in a notes file. The files capture the explicit decisions. The implicit knowledge still lives in people.

Try it

Next time you finish a productive AI conversation, before you close the tab, try this: ask the AI to review the chat and write up the decisions, constraints, and discoveries that aren't captured anywhere durable. Then put what it gives you somewhere durable. A markdown file. A project wiki. A shared doc. Anywhere that isn't the conversation itself.

Do that three times in a row and see if you can ever close a chat again without doing it.

The conversation is the workspace. The documentation is what gets to live past it.